There is a whole chunk of the Dolphin project that most users don't know about and never interact with. Most of the blog's articles focus on user-visible features: improvements in the emulator core, or accuracy changes that allow unplayable games to finally work properly. We seldom talk about how these changes come to life.

This piece will relay the effort of a few people within the Dolphin team who have been working in the shadows for the past 30 months to provide tools and infrastructure for other Dolphin contributors. From cloud-based graphics rendering and bug detection all the way to simple IRC bots, these tools have helped Dolphin become more efficient in the modern era.

Motivation: Dolphin 3.0 and the start of the Git era

Before Dolphin 3.0 was released, Dolphin developers used to work with a version control system called SVN (Subversion). Dolphin development was almost completely linear: versions were simple numbers like r5000, r5001, r5002, ... and each new version was based solely on its immediate predecessor. Users could download each of these development versions through an unofficial website, which continuously built new revisions for Windows x64/x86 and OS X. There was no such thing as a Dolphin development infrastructure at the time, and very little interaction with the owner of the unofficial website handling builds.

While Dolphin 3.0 was a great change for users, it was also a revolution for our development workflow. With the release came the switch from SVN to Git, a newer and more complex version control system allowing several parallel development branches. With these branches, developers can work independently on features and bug fixes without their buggy prototypes impeding the work of other contributors. Each large change was developed as an individual branch which was then integrated (or "merged") into the main development branch.

Unfortunately, the unofficial build infrastructure that Dolphin was relying on didn't really follow these changes in the development workflow. The maintainer of that infrastructure made the required changes to continue providing builds of the main development branch, but developers were in the dark when it came to their own branches. Each developer had to provide their own builds for testers, making it very difficult for people working mainly on Linux or OS X to get any testing help. This lack of progress on the unofficial build infrastructure coincided with some long downtimes and a general absence of communication from its maintainer. The straw that broke the camel's back was the increase in the number of ads on the website hosting the development builds, without any of that money going into build infrastructure improvements.

Around June 2012, I (delroth) was feeling that pain along with other Dolphin contributors while working on bug fixes and improvements. Since I come from a system design and administration background, I decided it was time to take the build infrastructure problems into our own hands and start work on an official Dolphin development infrastructure. It would provide the basic features of the previous unofficial infrastructure, while adding an emphasis on early detection of bugs and regressions (preferably before they reach most users).

Since then, a lot has changed. Dolphin 3.5 and 4.0 were released. Dolphin moved from Google Code to GitHub, mandating pull requests and code reviews in the process. A new website was developed to go along with the new build infrastructure, and became the first official website for the project maintained by the Dolphin team. Work also went into adding more testing-related features to the project.

A quick look at the current infrastructure

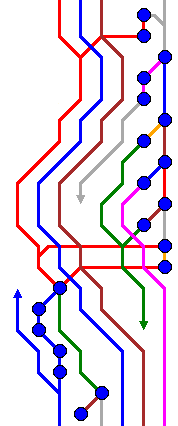

After two and a half years of development (mostly by yours truly, with small contributions from other individuals), this is the current state of the Dolphin development infrastructure, summarized in a convenient block diagram:

Let's go over some of these elements one by one.

Buildbot

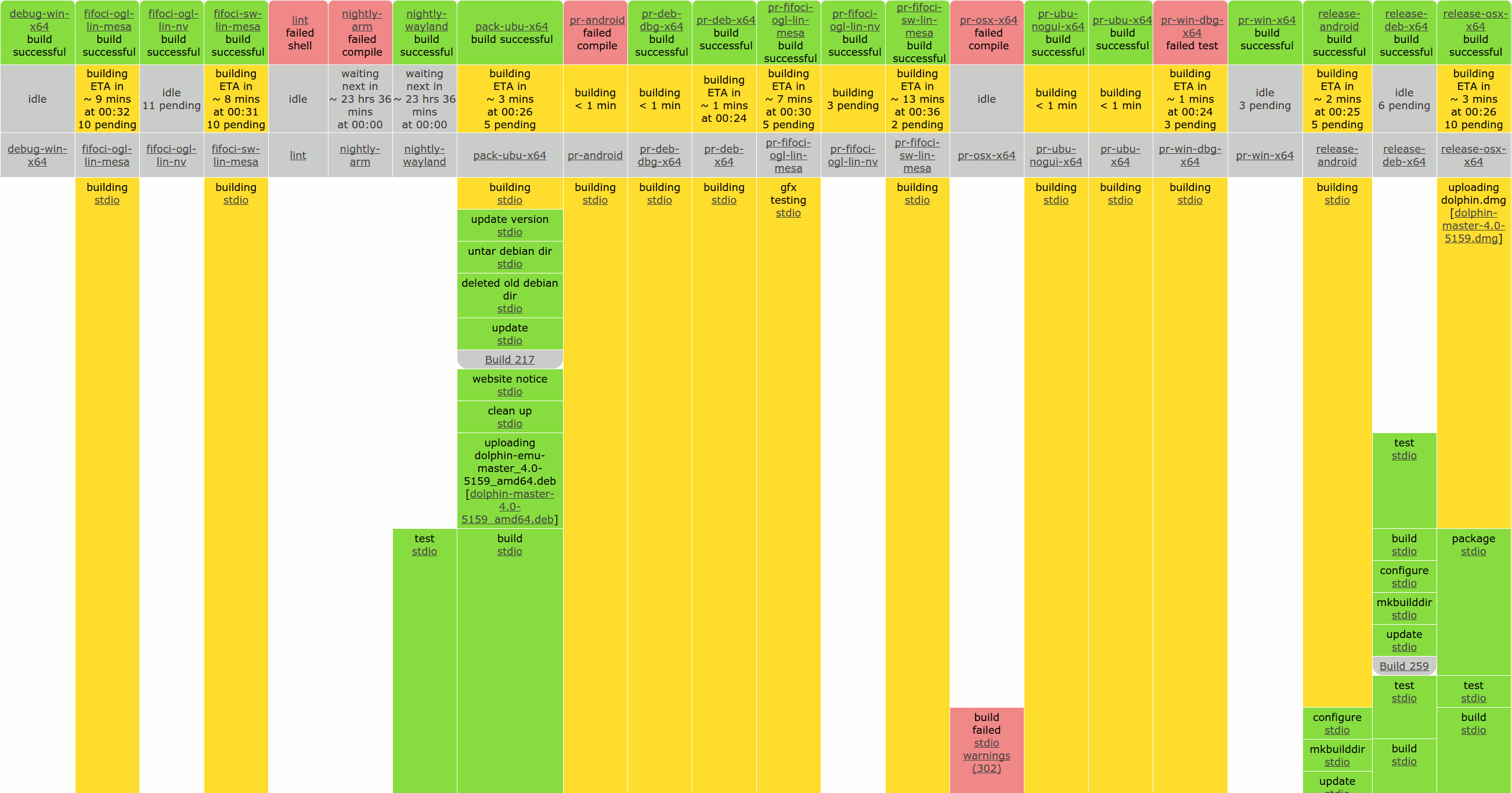

Buildbot is a central piece of our infrastructure. It is an open source project used by a large number of projects to automate builds. Dolphin uses it both as a regular continuous build system and as a more generic task runner. For example, FifoCI (a system we're going to explain in more detail later) uses Buildbot to schedule runs of graphics rendering tests.

We currently have five main types of tasks running on Buildbot:

- Revisions pushed to the main repository (to `master` or any other branch). These are built by our `release-*` builders. The resulting binaries (Windows x64 `.exe`, OS X `.dmg`, Ubuntu `.deb`) are pushed to our website, where they can be seen live on the Download page.

- Pull requests created or updated on our GitHub repository. These are handled by our `pr-*` builders. Binaries are also built (for Windows and OS X), but most importantly, the status of the build for the 8 configurations we test is reported back to GitHub (via Central, more on that later). This allows contributors to directly see whether their pull request builds and passes unit tests on some configurations we support. It is extremely rare that we merge pull requests that are red on one of these configurations.

- Nightly builds for some rarely used or experimental configurations that are not worth testing for every single commit or pull request. We currently have only two of these builds: `nightly-wayland` (build without X11 libraries) and `nightly-arm` (ARMv7 builds and unit tests on an ODROID U3 board).

- WIP builds, which allow any registered developer to send a base revision along with a patch to the Buildbot, and get this patch built and tested for the same set of platforms we support for pull requests. Very useful for one-off tests that don't really need a pull request.

- FifoCI builds, triggered for both mainline and pull request builds. Again, more details later. Since these builds are more expensive and take longer than other types of builds, we only trigger them after we have confirmed the mainline revision or pull request can build and pass unit tests already.
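The routing and gating described in this list can be pictured with a small sketch. It is only an illustration, not the actual Buildbot configuration: the event names, the `wip-` prefix and both functions are hypothetical, and only the `release-`, `pr-` and `nightly-` builder prefixes come from the list above.

```python
# Hypothetical sketch of how events map to builder families.
# Only the "release-", "pr-" and "nightly-" prefixes come from the article;
# the event names and the "wip-" prefix are assumptions for illustration.
BUILDER_FAMILY = {
    "push": "release-",
    "pull_request": "pr-",
    "nightly": "nightly-",
    "wip": "wip-",
}


def builder_family(event_kind):
    """Return the builder name prefix that handles this kind of event."""
    try:
        return BUILDER_FAMILY[event_kind]
    except KeyError:
        raise ValueError(f"unknown event kind: {event_kind!r}") from None


def should_trigger_fifoci(event_kind, built_ok, tests_ok):
    """FifoCI runs are expensive, so only queue them for mainline and pull
    request builds that already compiled and passed unit tests."""
    return event_kind in ("push", "pull_request") and built_ok and tests_ok
```

With this sketch, a pull request that fails its unit tests never reaches the more expensive rendering tests, which matches the ordering described in the last item.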

We run regular build runners on 5 different servers, along with 3 runners for FifoCI related tasks. Some of these servers are owned by the Dolphin project, but for the more annoying configurations (OS X, ARMv7) we also rely on donated hardware and servers from Dolphin developers.

The configuration we use for Dolphin's Buildbot system can be found on GitHub. It is messy and would really benefit from some large refactoring, but it does the work quite well.

Central

When Dolphin moved from Google Code to GitHub a year ago, the interactions between the developers and the build infrastructure started getting more complicated. Instead of having the build infrastructure interact only with revisions pushed to the main Dolphin code repository, we suddenly also needed to deal with pull requests, which are in essence interactive. This required our infrastructure to integrate with GitHub's hooks and API, and translate those requests and results. Dolphin's Central project was born from these requirements — developed to be the central notification hub which dispatches and translates events between systems to tie them all together.

Here are just a few of the event sources and targets Central currently handles:

- GitHub pull request creation and updates.

- GitHub pull request comments for developer-triggered actions (for example, developers can use `@dolphin-emu-bot rebuild` to manually trigger a pull request to be rebuilt).

- IRC messages to post updates where developers read them.

- Buildbot, both as a source (build status) and a target (job queue, where build requests get pushed when needed).

- FifoCI, which reports its test results.

- Google Code Atom feeds for issues (still handled on Google Code at the moment).
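The "notification hub" idea can be illustrated with a tiny publish/subscribe sketch. This is not Central's actual code: the `EventHub` class, the event names and the comment payload layout are hypothetical, and only the `@dolphin-emu-bot rebuild` command comes from the list above.

```python
import re

# Matches a comment such as "@dolphin-emu-bot rebuild". Only the "rebuild"
# command is mentioned in the article; the parsing rule is an assumption.
BOT_COMMAND = re.compile(r"@dolphin-emu-bot\s+(\w+)")


class EventHub:
    """Tiny publish/subscribe hub: sources publish, targets subscribe."""

    def __init__(self):
        self._handlers = {}

    def subscribe(self, event_type, handler):
        self._handlers.setdefault(event_type, []).append(handler)

    def publish(self, event_type, payload):
        for handler in self._handlers.get(event_type, []):
            handler(payload)


def handle_pr_comment(hub, comment):
    """Translate a pull request comment into a build request when it
    contains the rebuild command. Returns True if a request was published."""
    match = BOT_COMMAND.search(comment["body"])
    if match and match.group(1) == "rebuild":
        hub.publish("build_request", {"pr": comment["pr"]})
        return True
    return False
```

A Buildbot-facing target would subscribe to `build_request` and push a job to the queue, while an IRC target could subscribe to the same event to announce it. Keeping sources and targets decoupled this way is what lets a new system be added without touching the existing ones.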

While its initial version was hacked together over a weekend to handle the new requirements of the move to GitHub, Central has since evolved and has become one of the most crucial pieces of the whole infrastructure. You can find the current source code of the project on GitHub.

FifoCI

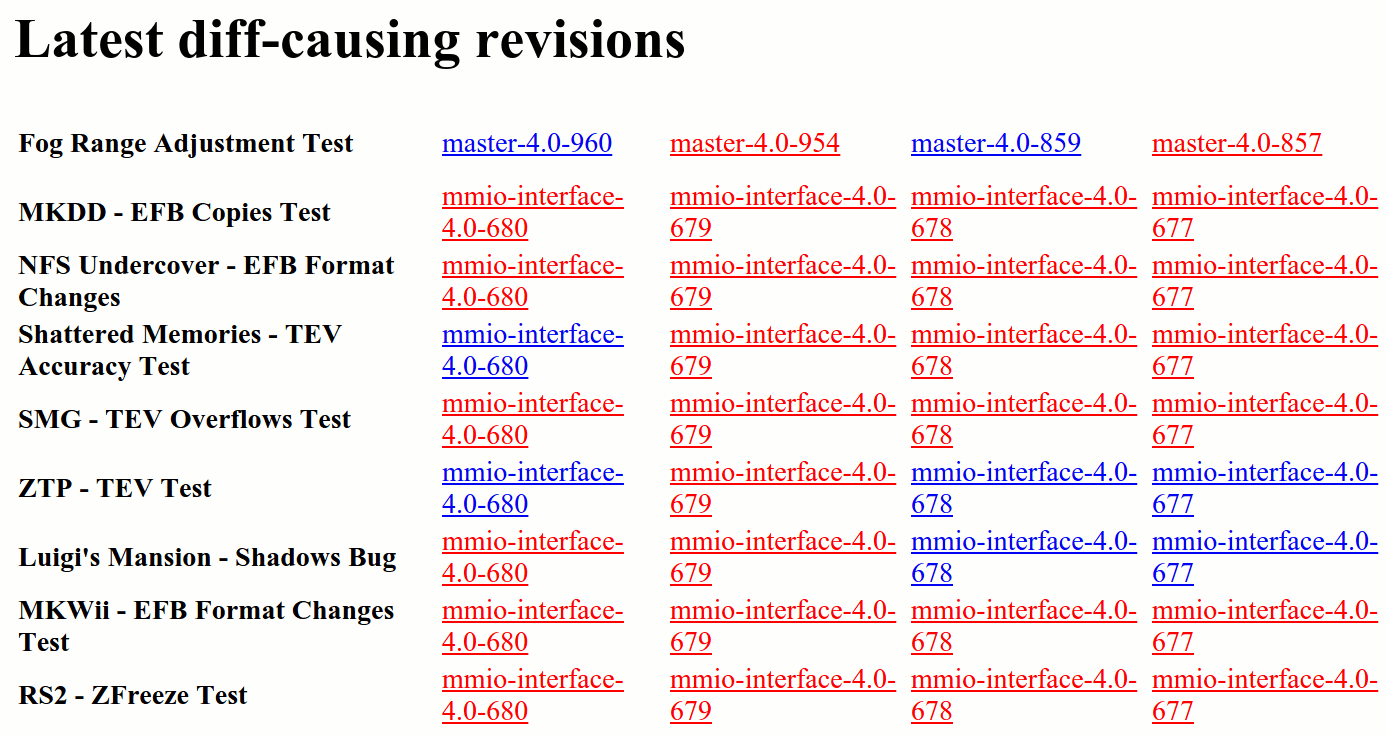

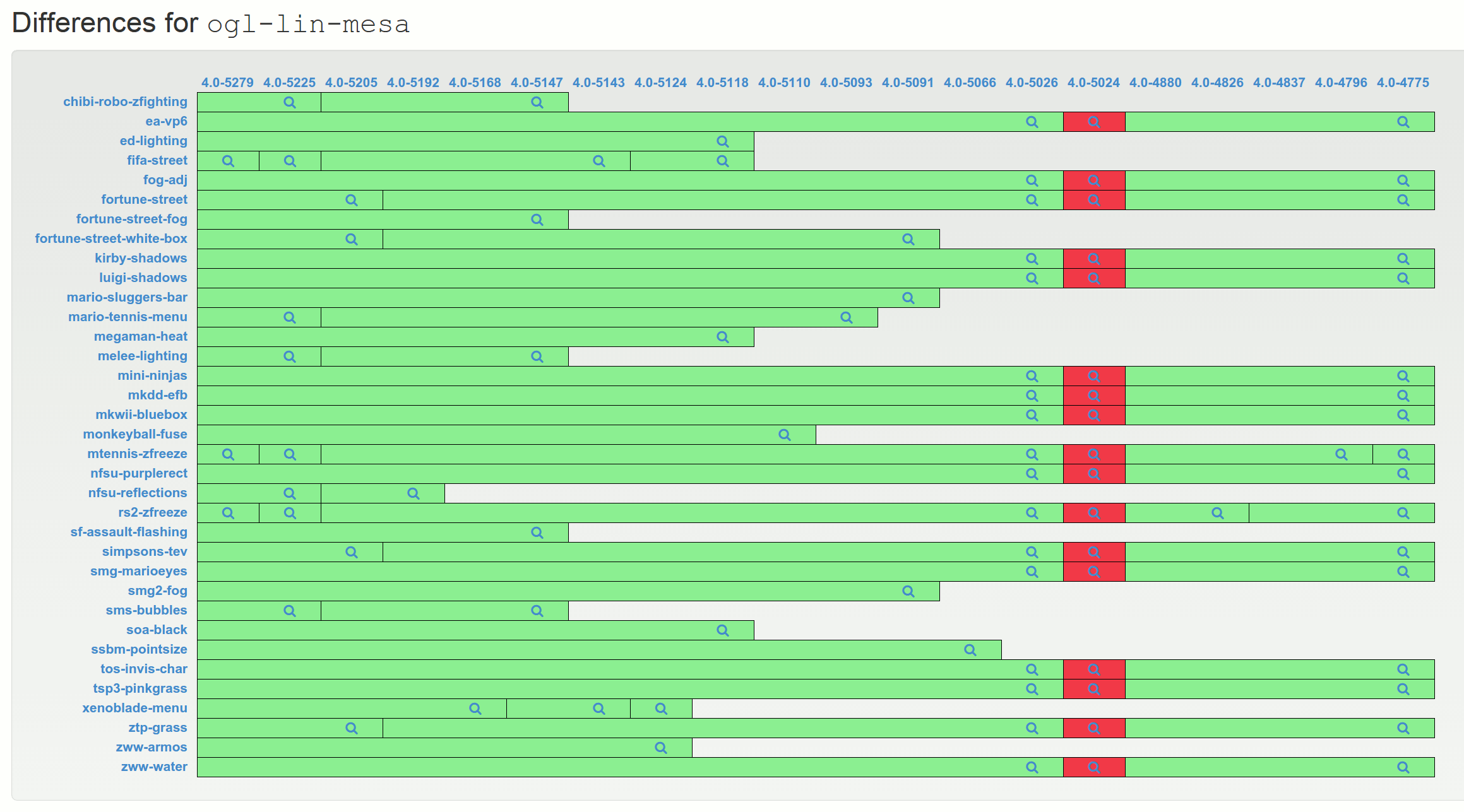

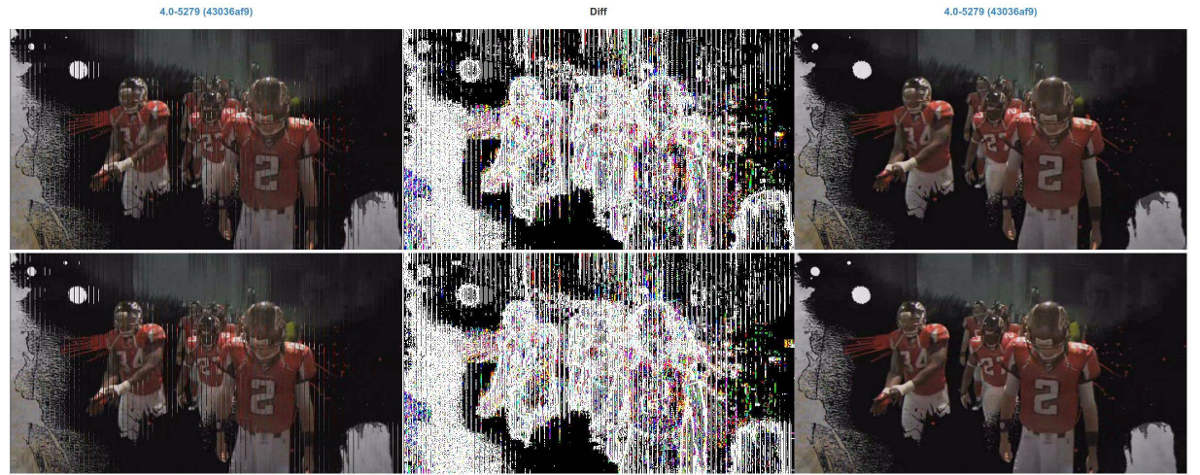

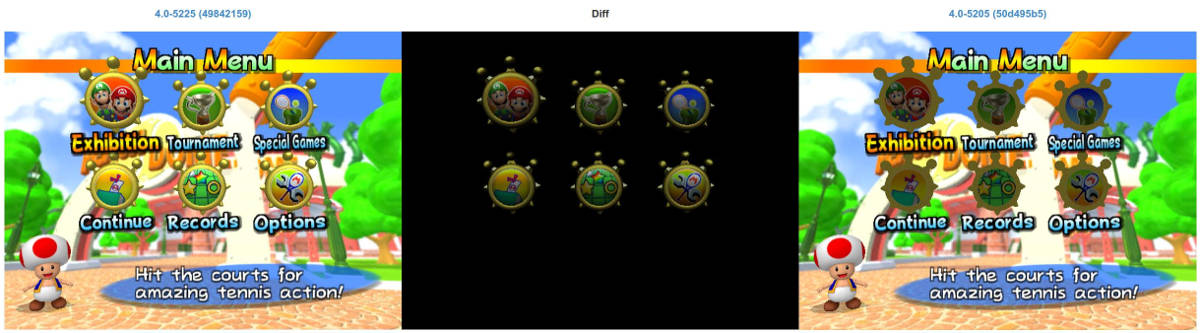

FifoCI is probably the most complex and most interesting component of our custom infrastructure. The idea came to my mind as early as August 2013, but it only came to fruition in January 2015. The idea behind the project is simple. Dolphin supports recording the data being transmitted between the emulated CPU and emulated GPU, through a feature called "FIFO logs". It also allows replaying this data after the fact, making it possible to replay the exact same input data and compare the results on different Dolphin versions, and even on a real Wii! FifoCI, short for "FIFO logs Continuous Integration", does exactly this: for each new version of Dolphin, it compares the rendering of a set of recorded GPU commands and notifies developers when the rendering differs.
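The comparison step can be sketched as follows. The article does not say how FifoCI actually compares images, so exact hash equality of raw frame bytes is an assumption here, and the function names and data layout are hypothetical.

```python
import hashlib


def frame_digest(pixels: bytes) -> str:
    """Hash the raw bytes of one rendered frame."""
    return hashlib.sha1(pixels).hexdigest()


def diff_renders(baseline, candidate):
    """Compare frames rendered by two Dolphin versions.

    Both arguments map a FIFO log name to a list of rendered frames (raw
    bytes). Returns {log_name: [indices of differing frames]}, listing only
    the logs whose output changed. A frame missing on either side counts
    as a difference.
    """
    changed = {}
    for name, base_frames in baseline.items():
        new_frames = candidate.get(name, [])
        indices = [
            i
            for i in range(max(len(base_frames), len(new_frames)))
            if i >= len(base_frames)
            or i >= len(new_frames)
            or frame_digest(base_frames[i]) != frame_digest(new_frames[i])
        ]
        if indices:
            changed[name] = indices
    return changed
```

Because the replayed input is identical on every run, any entry returned by `diff_renders` points at a change in the renderer rather than in the game, which is what makes the result worth reporting to developers.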

While a prototype was written a long time ago, using Mesa3D's swrast renderer in a headless Xorg server along with a terrible Python UI, it was never really stable enough to be useful. With no time to spend on its development, it died a slow death before disappearing completely in a server move.

Almost a year after the first prototype was written, with more time on my hands, I started work on a new version of FifoCI, learning from the errors of the first version. The so-called FifoCI v2 emphasized stability and UI in order to provide results with a good signal-to-noise ratio. The project got a few more contributions on the UI side from rmmh, and has evolved into a really useful platform during this month of January.

One of the most recent features of FifoCI is an integration with Amazon's cloud services, more specifically EC2. While running rendering tests on Mesa3D's software renderer provides some value, it is also interesting to see results from rendering on actual GPUs. Unfortunately, finding servers with GPUs is nearly impossible. Enter EC2, which allows us to rent servers on either Linux or Windows with real Nvidia GPUs, for prices as low as $0.065/hour. After a weekend of integration work, FifoCI now has the capability to run tests on EC2 Linux g2.2xlarge instances in a cost effective way (through some basic request batching), showing us diffs from problems only impacting Nvidia GPUs.
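To see why batching helps, here is a rough sketch. The article does not describe how the batching is implemented, so everything below is an assumption: the function names are hypothetical, and the cost model supposes that each instance launch is billed in whole hours, rounded up.

```python
import math


def batch_requests(pending, batch_size):
    """Split pending test requests into batches, one instance launch each."""
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    return [
        pending[i:i + batch_size] for i in range(0, len(pending), batch_size)
    ]


def billed_hours(batches, minutes_per_request):
    """Total hours billed, assuming every launch is rounded up to a full
    hour (an assumption about the billing model, not taken from the
    article)."""
    return sum(
        math.ceil(len(batch) * minutes_per_request / 60) for batch in batches
    )
```

Under these assumptions, twelve requests of five minutes each cost twelve billed hours when every request launches its own instance, but a single billed hour when they all run in one batch.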

We are now working to extend that system to automatically test Dolphin on Direct3D through EC2. Even though it has only been a few weeks since FifoCI became really useful, a few regressions in pull requests have already been found before they were merged, demonstrating the power of such a system.

FifoCI showing a code patch fixing a lighting bug.

Conclusion

While all this infrastructure work has mostly been a single-person project, it has been extremely interesting to write all these systems and everything that makes them work together. It's not very shiny, and in the end most users of the emulator don't directly care, but I would argue that having a proper development infrastructure for the project has been one of the main reasons behind its success during the last few years. Programs are never perfect, but they sure are better than humans at a lot of tasks. Dolphin still relies a lot on manual QA, but we hope that at some point our infrastructure will become good enough to detect most ordinary problems before wasting anyone's time.

All of the systems we have described in this article are open source. Feel free to read more details on GitHub: sadm (general system administration components) and fifoci (FifoCI specific pieces). And, of course, patches are welcome!